Chapter 1: The Fallacy of Volume and the Fragmentation of Intent

For the better part of two decades, the digital marketing industry operated under a singular, largely unquestioned heuristic: more content equals more traffic. This additive philosophy drove the architecture of the modern web. Marketing teams were incentivized by volume, measuring success in words published per week and pages indexed per month. The logic was linear and seductive—if one article on "enterprise software" yields a thousand visitors, then ten articles must surely yield ten thousand.

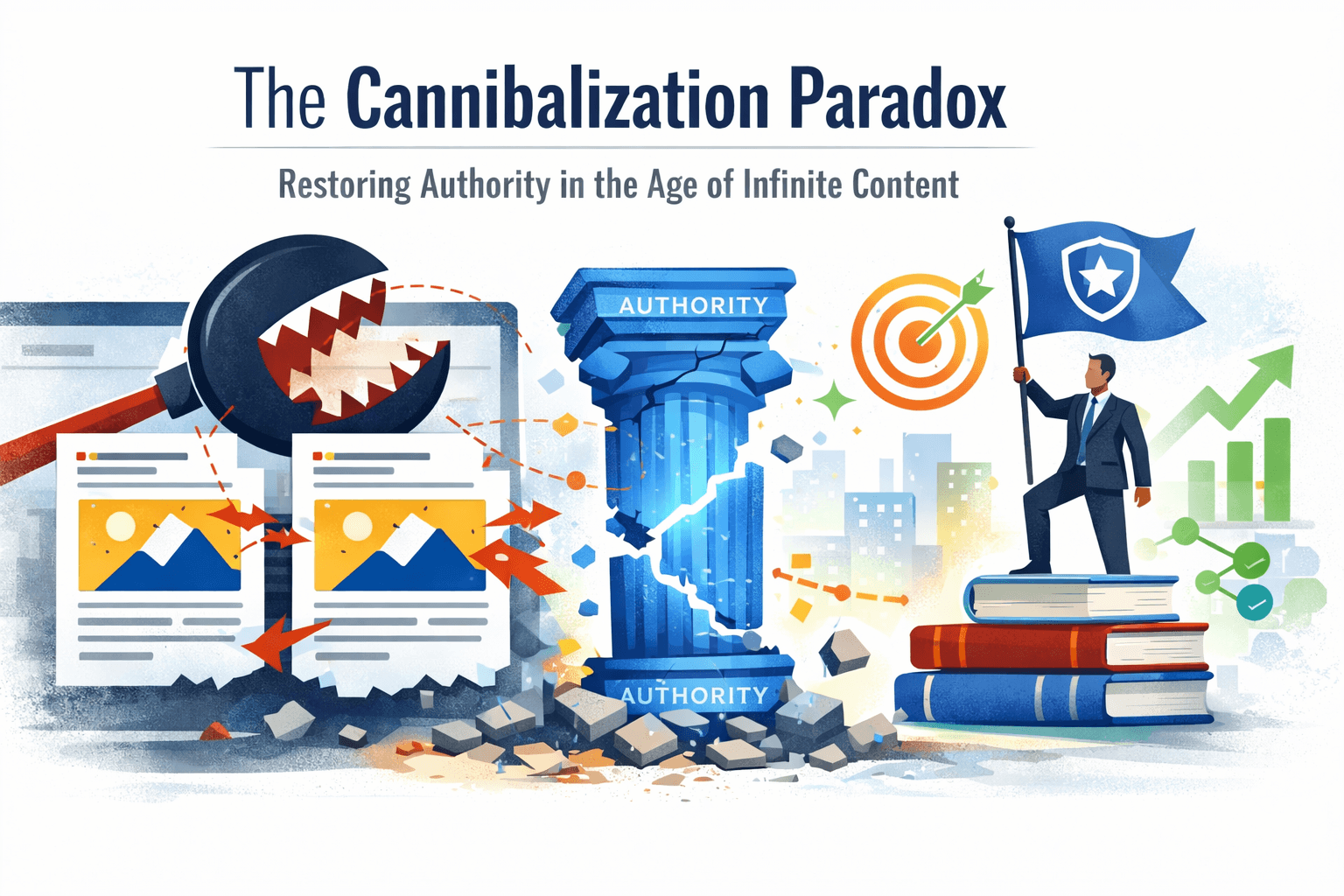

This assumption has catastrophic flaws in the current era of semantic search. We have transitioned from an era of scarcity, where information was the limiting factor, to an era of overwhelming abundance, particularly exacerbated by the generative capabilities of Artificial Intelligence. In this new reality, the primary threat to a website’s organic performance is often not the external competitor, but the website itself. This phenomenon is known as Content Cannibalization.

Content cannibalization is not merely a technical error or a keyword conflict; it is a fundamental strategic failure where a domain competes against itself, fracturing its authority and forcing search engines to choose between multiple imperfect options rather than presenting a single, authoritative result.1 It occurs when multiple pages on a single website target the same keyword or, more accurately, the same search intent. This forces search engines like Google to split relevance signals—such as backlinks, click-through rates (CTR), and engagement metrics—across these pages rather than concentrating them on one strong URL. The result is fragmented rankings, reduced visibility, and a digital footprint that is wide but perilously shallow.

1.1 The Semantic Shift: From Strings to Things

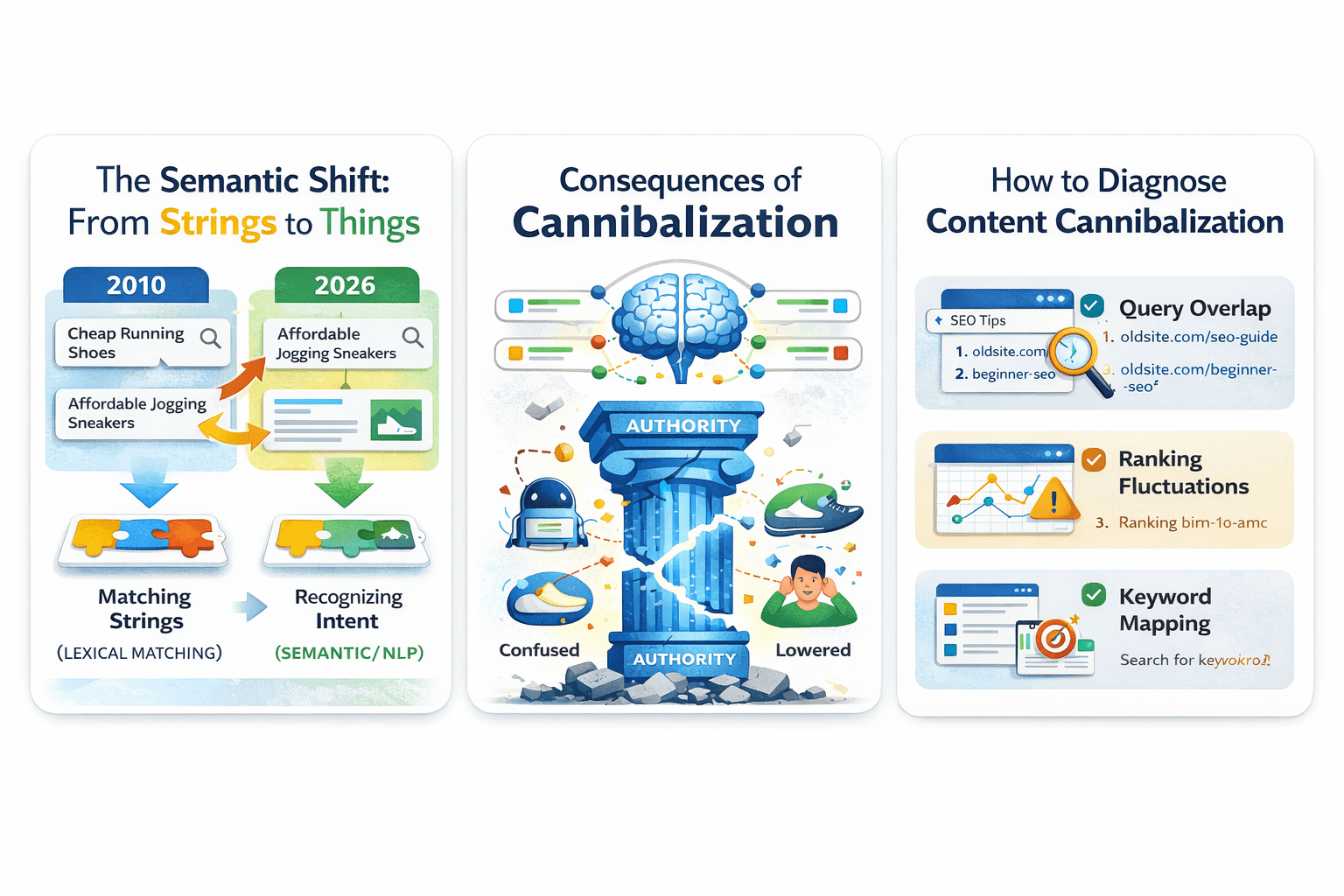

To understand why cannibalization is so destructive in 2026, one must understand the evolution of the underlying search algorithms. In the early days of SEO (circa 2010), search engines were primarily lexical: they matched strings of text. If you had a page about "cheap running shoes" and another about "affordable jogging sneakers," a purely lexical engine might treat them as answers to different queries because the character strings differed. In that environment, creating multiple pages to capture specific keyword variations was a viable, albeit spammy, strategy.

Today, engines use vector embeddings and transformer models (such as BERT and MUM) to understand the semantic relationships between words. "Cheap" and "affordable" map to nearby points in the same vector space; "running" and "jogging" are recognized as expressing the same intent. When a website publishes separate articles for these terms, the search engine sees two pages attempting to answer the exact same user question.
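To make the "nearby points" idea concrete, here is a minimal Python sketch of cosine similarity, the standard way to compare embedding vectors. The three-dimensional vectors are invented for illustration. Production search systems use far larger embeddings, and Google does not publish the models or similarity thresholds it applies.

```python
import math

# Invented 3-dimensional vectors for illustration only; real embedding
# models output hundreds of dimensions learned from data.
EMBEDDINGS = {
    "cheap running shoes": [0.82, 0.51, 0.10],
    "affordable jogging sneakers": [0.79, 0.55, 0.12],
    "enterprise software pricing": [0.05, 0.20, 0.97],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

base = EMBEDDINGS["cheap running shoes"]
for phrase, vector in EMBEDDINGS.items():
    print(f"{phrase!r}: {cosine_similarity(base, vector):.3f}")
```

With these toy vectors the two shoe phrases score close to 1.0, while the unrelated phrase scores roughly 0.25. This is the geometric sense in which a semantic engine treats the first two as one intent.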

This semantic understanding means that the definition of cannibalization has expanded. It is no longer just about keyword duplication; it is about Intent Overlap. If you have a blog post titled "Top 10 Marketing Strategies" and a service page titled "Marketing Strategy Services," and both attempt to educate the user on the basics of strategy, they are in conflict. The search engine, unable to distinguish which page is the canonical source of truth for the query "marketing strategy," may oscillate between them, ranking neither effectively.

1.2 The Economic Consequence of Split Authority

The implications of this internal competition are measurable in lost revenue and wasted resources. When authority is split, several key performance indicators degrade:

| Metric | Impact of Cannibalization | Mechanism of Failure |
|---|---|---|
| Link Equity | Diluted | External sites link to different URLs, preventing any single page from accumulating enough authority to rank for competitive terms. |
| Crawl Budget | Wasted | Search bots spend resources indexing redundant pages rather than discovering new or updated content. |
| User Experience | Confused | Users encounter inconsistent information or land on low-value blog posts instead of high-converting product pages. |
| Conversion Rate | Lowered | Informational content often outranks transactional pages, leading users to "read" rather than "buy". |

The most insidious effect is the "wrong page ranking" problem. Consider a company offering SEO services. They likely have a core landing page optimized for conversion. However, if they have also published five blog posts discussing "SEO for Startups" in a generic fashion, Google might rank one of the blog posts instead of the service page. The user lands on an article, reads a few paragraphs, and leaves. The traffic metric looks healthy, but the lead generation pipeline dries up because the high-intent traffic is being shunted to a low-intent asset.

1.3 Distinguishing the Diagnosis

Before a cure can be administered, the pathology must be accurately identified. Content cannibalization is often confused with other SEO issues, yet the remedies for each are distinct.

Cannibalization vs. Duplication: Duplication involves identical or near-identical text appearing on multiple URLs. It is often a technical issue caused by CMS parameters (e.g., ?sort=price vs. ?sort=new). Search engines usually filter these out automatically or via canonical tags. Cannibalization, however, involves unique content. You might have written two completely different, high-quality articles on the same topic. Both are original, both are valuable, but they serve the same purpose. This makes cannibalization harder to detect because the content itself passes quality checks.

Cannibalization vs. Plagiarism: Plagiarism is the theft of content from external domains. It is an ethical and legal violation that can lead to copyright takedowns, penalties, or manual actions against the offending site. Cannibalization is an internal domain issue: self-inflicted competition. If plagiarism is like stealing from a neighbor, cannibalization is like your left hand fighting your right hand for the same fork.

Cannibalization vs. Keyword Stuffing: Keyword stuffing is the overuse of a term on a single page to manipulate rankings. Cannibalization is the spreading of a term across too many pages. The former is a density issue; the latter is an architectural issue. Stuffing makes a page look spammy to a user; cannibalization makes a site look confused to a bot.
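The duplication-versus-cannibalization distinction shows up clearly in a text-similarity check. This sketch uses word-shingle Jaccard similarity, a common near-duplicate heuristic chosen here for illustration (the article does not prescribe a method). All sample page texts are hypothetical.

```python
import re

def shingles(text, k=3):
    """Set of overlapping k-word sequences from lowercased text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}

def jaccard(a, b):
    """Share of shingles two texts have in common, from 0.0 to 1.0."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

# Duplication: the same listing served under ?sort=price and ?sort=new.
sort_price = "Trail runner X2 waterproof upper grippy sole ships in two days. Sorted by price."
sort_new = "Trail runner X2 waterproof upper grippy sole ships in two days. Sorted by newest."
dup_score = jaccard(shingles(sort_price), shingles(sort_new))

# Cannibalization: two independently written articles with the same intent.
article_a = "Our checklist walks through crawl errors, redirects and page speed step by step."
article_b = "Auditing a site starts with the tools: we compare crawlers, log analyzers and speed testers."
cannibal_score = jaccard(shingles(article_a), shingles(article_b))

print(f"parameter duplicate: {dup_score:.2f}")
print(f"same-intent articles: {cannibal_score:.2f}")
```

The two parameter variants share 11 of 13 shingles (about 0.85). The two audit articles share none, even though they target the same intent. That is why cannibalization slips past duplicate-content filters and has to be found in ranking data rather than in the text itself.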

Chapter 2: The Mechanics of Internal Conflict

To resolve cannibalization, we must move beyond the surface-level symptoms and understand the algorithmic mechanics that drive it. Search engines operate on a system of signals. Every page on the web emits a complex array of signals—content relevance, backlink authority, user behavior (dwell time, bounce rate), and internal link structures.

2.1 The Signal Dilution Effect

When multiple pages target the same intent, these signals are diluted. Imagine you have 100 "authority points" (a simplified metaphor for PageRank or link equity) available for the topic of "Technical Audits."

- Scenario A (Consolidated): You have one comprehensive guide. It receives all 10 backlinks from external industry blogs. It receives all internal links from your navigation. It accumulates 100 points. It ranks #1.

- Scenario B (Cannibalized): You have four separate articles: "Audit Checklist," "How to Audit," "Audit Tools," and "Audit Guide." The external backlinks are scattered: three links go to the checklist, two to the guide, five to the tools page, and none to "How to Audit." Internally, your blog links inconsistently to all four. Split four ways, the 100 points average just 25 per page. None of them has the threshold authority to break into the top 10 results.
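The authority-points metaphor can be expressed as a toy model. This sketch is not PageRank. Purely for illustration, it assumes that half of the 100-point pool follows external backlinks and half follows internal links spread evenly across the competing pages. It also uses a hypothetical ranking threshold of 60 points.

```python
POOL = 100.0       # total "authority points" for the topic (the article's metaphor)
THRESHOLD = 60.0   # hypothetical points needed to break into the top 10

def split_authority(backlinks, pool=POOL):
    """Toy model, not PageRank: half the pool follows external backlinks
    (proportionally), half follows internal links spread evenly."""
    total_links = sum(backlinks.values())
    per_page_internal = 0.5 * pool / len(backlinks)
    scores = {}
    for url, links in backlinks.items():
        # If no page has backlinks, the external half is simply not awarded.
        external = 0.5 * pool * links / total_links if total_links else 0.0
        scores[url] = external + per_page_internal
    return scores

consolidated = split_authority({"audit-guide": 10})
cannibalized = split_authority(
    {"audit-checklist": 3, "how-to-audit": 0, "audit-tools": 5, "audit-guide": 2}
)

for label, scores in (("Scenario A", consolidated), ("Scenario B", cannibalized)):
    for url, points in scores.items():
        print(f"{label} {url}: {points:.1f} points, top-10 capable: {points >= THRESHOLD}")
```

Under these assumptions the consolidated guide keeps all 100 points, while the best of the four cannibalized pages reaches 37.5. The exact figures depend entirely on the assumed split. The point is that no fragment gets close to the consolidated total.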

This dilution is the primary mechanical failure mode of cannibalization. It explains why consolidating content so often produces ranking gains: you are effectively pooling your "authority points" into a single vessel.

2.2 The Rotation Phenomenon

One of the most reliable diagnostic signs of cannibalization is ranking instability, often called "URL Rotation." This occurs when Google cannot decide which page is the best answer.

- Week 1: Page A ranks at position 6.

- Week 2: Page B ranks at position 9; Page A disappears.

- Week 3: Page A returns at position 12.

- Week 4: Both pages appear at positions 20 and 21.

This oscillation is a clear indicator that the algorithm is testing different hypotheses. It is trying to determine which page satisfies the user. The fluctuation itself is harmful because it prevents either page from building the long-term user behavior data (like consistent click-through rates) that solidifies top rankings.
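Rotation can be flagged mechanically from ranking snapshots. The sketch below assumes you already collect weekly positions per URL for a query, for example from rank-tracking exports. The data mirrors the Week 1 to Week 4 example. The two-switch threshold for calling a query "rotating" is an arbitrary, hypothetical choice.

```python
ROTATION_THRESHOLD = 2  # hypothetical: leader changes needed to call it "rotating"

# Weekly positions for one query, mirroring the Week 1-4 example above.
# A URL missing from a week was not found in the tracked results that week.
WEEKS = [
    {"/page-a": 6},
    {"/page-b": 9},
    {"/page-a": 12},
    {"/page-a": 20, "/page-b": 21},
]

def leading_url(snapshot):
    """The URL with the best (numerically lowest) position that week."""
    return min(snapshot, key=snapshot.get)

def rotation_report(weeks):
    leaders = [leading_url(week) for week in weeks if week]
    switches = sum(1 for prev, cur in zip(leaders, leaders[1:]) if prev != cur)
    ranking_urls = set().union(*weeks)  # every URL that ranked at least once
    return {
        "leaders": leaders,
        "switches": switches,
        "ranking_urls": ranking_urls,
        "rotating": switches >= ROTATION_THRESHOLD and len(ranking_urls) > 1,
    }

print(rotation_report(WEEKS))
```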

2.3 Page Depth and Architectural Hierarchies

A frequently overlooked contributor to cannibalization is page depth in SEO. This refers to the number of clicks it takes to reach a specific page from the homepage. Search engines use page depth as a proxy for importance. A page one click away from the homepage (Depth 1) is assumed to be more critical than a page five clicks deep (Depth 5).

Cannibalization often occurs when a low-value blog post is architecturally "closer" to the surface than a high-value service page.

- The Conflict: A "Services" page might be buried under Home > Services > Consultancy > Enterprise (Depth 3). Meanwhile, a new blog post is linked directly from the homepage's "Recent Posts" widget (Depth 1).

- The Result: A crawler sees the blog post as more important due to its proximity to the root domain. It prioritizes the blog post for the target keyword, cannibalizing the deeper, more valuable service page.

Understanding the distinction between Page Depth (clicks from home) and Crawl Depth (how easily a bot finds the page) is vital here. A page might be deep in the site structure but have high crawl priority if it has many internal links. Mismanaging these depths creates signals that contradict your strategic goals.4
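Page depth as defined above (clicks from the homepage) is a shortest-path problem, so a breadth-first search over the internal-link graph computes it. The link graph below is a hypothetical reconstruction of the Services-versus-blog example. In practice it would come from a site crawl.

```python
from collections import deque

# Hypothetical internal-link graph: each page maps to the pages it links to.
LINKS = {
    "/": ["/services", "/blog/new-post"],  # main nav + "Recent Posts" widget
    "/services": ["/services/consultancy"],
    "/services/consultancy": ["/services/consultancy/enterprise"],
    "/blog/new-post": [],
    "/services/consultancy/enterprise": [],
}

def click_depth(links, start="/"):
    """Breadth-first search: minimum number of clicks from `start` to each page."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first time BFS reaches a page = shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth

for page, clicks in sorted(click_depth(LINKS).items(), key=lambda item: item[1]):
    print(f"Depth {clicks}: {page}")
```

The blog post comes out at depth 1 and the enterprise service page at depth 3, which reproduces the conflict described above. Pages missing from the result cannot be reached from the homepage at all (orphans). That is a separate warning sign.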

Chapter 3: The AI Factor – The Hidden Threat of 2025

The emergence of Generative AI has transformed cannibalization from a management nuisance into an existential threat. As we moved through 2024 and into 2025, the barrier to content creation dropped to near zero. This absence of friction allowed for the proliferation of what industry analysts call the "Infinite Content Loop".

3.1 The Infinite Content Loop

The "Infinite Content Loop" describes a recursive cycle where AI models, trained on existing web content, generate new content that is semantically identical to the source material. This content is then published, indexed, and potentially scraped again to train future models.

For an individual website, this manifests as Internal AI Overproduction. A content team, using tools like ChatGPT or Jasper without strict governance, might generate dozens of articles based on keyword lists. The AI, lacking the strategic context of the site's existing library, treats each keyword as a unique prompt.

- Prompt 1: "Write an article about LSI Keywords."

- Prompt 2: "Write a guide on Latent Semantic Indexing."

- Result: The AI produces two articles that are 90% overlapping in substance. The site publishes both. The site is now cannibalizing itself at scale.

This creates a "bloat" where the ratio of unique, valuable pages to total pages plummets. Google's "Helpful Content" systems are designed to detect this lack of information gain. A site with 1,000 pages of repetitive AI drivel will see its site-wide authority suppressed, dragging down even the high-quality human-written pages.

3.2 External AI Scraping and Theft

A more hostile form of cannibalization involves external AI agents. Scrapers feed your high-ranking content into Large Language Models (LLMs) to rewrite it. These "spun" versions are then published on competitor sites or "Made for Advertising" (MFA) farms.

- The Mechanism: The AI rewrites the sentence structure and swaps synonyms, bypassing traditional plagiarism detectors (like Copyscape) and simple duplicate content filters.

- The Threat: To a search engine, this looks like a "new perspective" on the topic. If the scraper site has higher domain authority (or uses manipulated expired domains), the stolen, rewritten content can outrank the original source. This is AI Content Cannibalization—the theft of semantic authority.

3.3 Protective Measures for Original Content

In this environment, defense becomes as important as creation. Protecting original content from AI cannibalization requires technical and strategic barriers.

- Honeytrap Pages: Advanced webmasters are deploying "honeytrap" pages: hidden links to URLs that are disallowed in robots.txt. Legitimate crawlers obey the rule and never request them, so any client that does is ignoring robots.txt. If an IP address accesses these traps, it is immediately flagged and blocked firewall-wide.

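- Crawl Delay: Implementing crawl-delay directives for non-essential bots can slow mass scraping and make it more expensive for the attacker, acting as a soft deterrent. The directive is only advisory, however. Bots that honor robots.txt obey it (Googlebot ignores it entirely), but hostile scrapers do not, so they must also be rate-limited at the server.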

- Proprietary Assets (The "Unspinnable"): The ultimate defense is to publish content that AI cannot easily spin. This includes:

- Original Data Tables: Hard-coded data from proprietary surveys or internal logs.

- Interactive Tools: Calculators or configurators that require server-side logic.

- Coining Terms: Introducing unique nomenclature (e.g., "The Skyscraper Technique" or "The Cannibalization Paradox"). When AI rewrites the content, it must either use your term (attributing authority to you) or lose the meaning. If you coin the term, you own the keyword.
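The honeytrap and crawl-delay measures can be sketched with Python's standard-library robots.txt parser, urllib.robotparser. The trap path /trap/, the ten-second delay, and the access-log format are all hypothetical. Real blocking would happen in your firewall or CDN, not in this script.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: /trap/ is disallowed for every user agent, and
# bots that honor Crawl-delay are asked to wait 10 seconds between requests.
ROBOTS_TXT = """\
User-agent: *
Disallow: /trap/
Crawl-delay: 10
"""

TRAP_PREFIX = "/trap/"  # hypothetical honeytrap path, linked invisibly from pages

def build_robots_parser(text):
    """Parse robots.txt content already in memory (no network fetch)."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser

def flag_trap_visitors(access_log):
    """access_log: iterable of (ip, path). A compliant crawler never requests a
    disallowed trap URL, so any IP that does is ignoring robots.txt."""
    return {ip for ip, path in access_log if path.startswith(TRAP_PREFIX)}

robots = build_robots_parser(ROBOTS_TXT)
print(robots.can_fetch("ExampleBot", "/trap/page"))  # False: compliant bots stay out
print(robots.crawl_delay("ExampleBot"))              # 10

log = [
    ("198.51.100.4", "/blog/post"),
    ("203.0.113.7", "/trap/page"),
    ("203.0.113.7", "/pricing"),
]
print(flag_trap_visitors(log))  # {'203.0.113.7'}: candidate for a firewall block
```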

Chapter 4: Diagnosing the Invisible

Because cannibalization is often a silent killer, manifesting as stagnation rather than a sudden drop, diagnosis requires a proactive, forensic approach. It involves triangulating data from manual searches, Google Search Console (GSC), and site crawls.

4.1 Manual Triage: The site: Operator

The most accessible tool for immediate diagnosis is Google's own search operators. This method is qualitative but highly effective for spotting obvious overlaps.

- The Protocol: Perform a search using site:yourdomain.com "target topic".

- Analysis: Examine the number of results. If you search site:traficxo.com "SEO audit" and see 40 pages returned, you likely have a problem. Scrutinize the titles. Are "SEO Audit Guide," "How to Do an Audit," "Audit Checklist," and "Technical SEO Audit Steps" all present?

- The Insight: If the titles and meta descriptions are virtually interchangeable, users (and bots) will struggle to choose. This visual confirmation is often enough to justify a deeper audit.

4.2 Quantitative Forensics with Google Search Console

Google Search Console (GSC) provides the source of truth for how Google handles your pages. It reveals the actual ranking behavior, including the damaging rotation phenomena.

The GSC Audit Protocol:

- Select a High-Impact Keyword: Navigate to the "Performance" report and filter by a query that is underperforming (e.g., high impressions, low clicks, or stuck on page 2).

- Drill Down to Pages: Click on the query to isolate its data, then switch to the "PAGES" tab.

- Interpret the Distribution:

- Healthy Signal: One URL receives >90% of the impressions.

- Cannibalized Signal: Two or more URLs split the impressions (e.g., 60% / 40% or 30% / 30% / 40%). This split indicates that Google is unsure which page is relevant.

- Analyze the "Average Position" Graph: Look for volatility. If Page A ranks at position 10 on Monday and Page B ranks at position 12 on Tuesday, the graph will show a "sawtooth" pattern. This lack of stability destroys click-through rates because users rarely see the same result twice.
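The distribution check can be scripted once the Pages data for a query is exported. This minimal sketch assumes one row per URL, as (page, impressions), for a single query. It applies the >90% rule of thumb from the protocol above. URLs and numbers are hypothetical.

```python
def impression_shares(rows):
    """rows: (page, impressions) pairs for ONE query, one row per URL.
    Returns {page: share of the query's impressions, 0.0-1.0}."""
    total = sum(impressions for _, impressions in rows)
    if total == 0:
        return {}
    return {page: impressions / total for page, impressions in rows}

def classify_query(rows, healthy_share=0.90):
    """'healthy' if one URL gets more than `healthy_share` of impressions."""
    shares = impression_shares(rows)
    if not shares:
        return "no data"
    return "healthy" if max(shares.values()) > healthy_share else "cannibalized"

# Hypothetical Pages-tab exports for two queries
print(classify_query([("/seo-audit-guide", 9500), ("/audit-checklist", 500)]))  # healthy
print(classify_query([("/seo-audit-guide", 6000), ("/audit-checklist", 4000)]))  # cannibalized
```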

4.3 Matrix Analysis for Enterprise Sites

For sites with thousands of pages, manual checking is unscalable. Matrix analysis using spreadsheet exports is required.

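- The Setup: Export full GSC performance data with both the Query and Page dimensions. The standard Performance report export lists queries and pages in separate tables, so a combined Query + Page export usually comes from the Search Console API or a connector such as Looker Studio.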

- The Pivot: Create a pivot table where:

- Rows = Query

- Values = Count of Unique URLs (Pages), plus Sum of Impressions

- The Filter: Filter the pivot table to show only queries where "Count of Unique URLs" is greater than 1.

- The Prioritization: Sort by "Impressions." A cannibalization issue on a query with 10 impressions is irrelevant. A conflict on a query with 50,000 impressions is a revenue emergency. This method allows you to identify the "bleeding" arteries of the site immediately.
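The same pivot can be reproduced without a spreadsheet. This sketch assumes rows of (query, page, impressions) from a combined Query + Page export. It counts unique URLs per query, keeps queries served by more than one URL, and sorts by total impressions. The sample rows and the min_impressions cutoff are hypothetical.

```python
from collections import defaultdict

def cannibalization_matrix(rows, min_impressions=0):
    """rows: iterable of (query, page, impressions).
    Returns (query, unique_url_count, total_impressions) tuples for queries
    served by more than one URL, highest impressions first."""
    pages = defaultdict(set)
    impressions = defaultdict(int)
    for query, page, imp in rows:
        pages[query].add(page)
        impressions[query] += imp
    conflicts = [
        (query, len(urls), impressions[query])
        for query, urls in pages.items()
        if len(urls) > 1 and impressions[query] >= min_impressions
    ]
    return sorted(conflicts, key=lambda row: row[2], reverse=True)

# Hypothetical export rows
ROWS = [
    ("seo audit", "/seo-audit-guide", 30000),
    ("seo audit", "/audit-checklist", 20000),
    ("seo tips", "/best-seo-advice", 800),
    ("seo tips", "/seo-tips-2023", 400),
    ("email marketing", "/email-marketing-software", 5000),
    ("audit tools", "/audit-tools", 3),
    ("audit tools", "/audit-tools-old", 7),
]

for query, url_count, total in cannibalization_matrix(ROWS, min_impressions=100):
    print(f"{query}: {url_count} URLs, {total} impressions")
```

The cutoff mirrors the prioritization step: a conflict on a query with a handful of impressions is dropped, leaving only the "bleeding arteries".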

4.4 Intent Mapping Validation

Intent-mapping frameworks go a step further by tagging each keyword with its search intent.

- Intent Mismatch: Sometimes GSC shows two pages ranking, but one is a PDF and one is a product page. This might not be traditional cannibalization, but it indicates a failure to serve the correct format. If the PDF is outranking the product page for a transactional query ("buy widgets"), the site is losing money. This requires diagnosing why the product page is failing to satisfy the algorithm (e.g., thin content, slow load times) rather than just merging the pages.

Chapter 5: The Architecture of Authority

Prevention is superior to remediation. The most effective defense against cannibalization is a robust information architecture (IA). This involves moving away from flat, blog-centric structures toward hierarchical, clustered architectures.

5.1 The "One Intent, One URL" Doctrine

Modern content governance must adhere to a strict doctrine: One Intent, One URL. Every page on the site must have a unique reason for existing that does not overlap with another.

- Informational: "What is X?" (The definition)

- Commercial Investigation: "Best X Tools" (The comparison)

- Transactional: "Buy X Service" (The solution)

- Navigational: "Login to X" (The utility)

Before a writer creates a new piece of content, they must consult the "Intent Map." If the intent is "explain how to clean a cast iron skillet," and a page already exists titled "Cast Iron Cleaning Guide," a new article titled "10 Tips for Pan Cleaning" should be rejected. Instead, the existing guide should be updated. This discipline prevents the "rot" of redundancy from setting in.
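An Intent Map can be as simple as a registry keyed by topic and intent. The sketch below is one hypothetical implementation of the One Intent, One URL rule, using the four intent types listed above. It assumes an editor assigns each proposed piece a topic label. Deciding that "pan cleaning" and "cast iron cleaning" are the same topic remains a human (or similarity-tool) judgment.

```python
from enum import Enum

class Intent(Enum):
    INFORMATIONAL = "informational"
    COMMERCIAL = "commercial investigation"
    TRANSACTIONAL = "transactional"
    NAVIGATIONAL = "navigational"

class IntentMap:
    """One Intent, One URL: each (topic, intent) pair may be owned by one URL."""

    def __init__(self):
        self._owners = {}  # (normalized topic, Intent) -> URL

    @staticmethod
    def _key(topic, intent):
        # Normalize case and whitespace so "Cast  Iron" matches "cast iron".
        return (" ".join(topic.lower().split()), intent)

    def conflict(self, topic, intent, url):
        """Return the URL that already owns this intent, or None if `url` may proceed."""
        owner = self._owners.get(self._key(topic, intent))
        return owner if owner not in (None, url) else None

    def register(self, topic, intent, url):
        owner = self.conflict(topic, intent, url)
        if owner:
            raise ValueError(f"{topic!r} ({intent.value}) is already served by {owner}; update that page instead")
        self._owners[self._key(topic, intent)] = url

intent_map = IntentMap()
intent_map.register("Cast iron cleaning", Intent.INFORMATIONAL, "/cast-iron-cleaning-guide")
# A proposed "10 Tips for Pan Cleaning" article, tagged with the same topic:
print(intent_map.conflict("cast iron  cleaning", Intent.INFORMATIONAL, "/10-tips-pan-cleaning"))
```

The proposal is rejected in favor of the existing guide, while a transactional page on the same topic would still be allowed.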

5.2 Topic Clusters and Pillar Content

The Topic Cluster model is the structural antidote to cannibalization. It organizes content into a hub-and-spoke model.

- The Pillar Page (Hub): A broad, authoritative guide covering a high-level topic (e.g., "Digital Marketing"). It touches on all aspects but links out for details.

- The Cluster Pages (Spokes): Specific articles covering sub-topics (e.g., "SEO," "PPC," "Email Marketing").

- The Link Logic: All cluster pages link back to the Pillar. Crucially, they link only to the Pillar for the broad term. A post about "SEO Tips" should link to the "Digital Marketing" pillar with the anchor text "Digital Marketing." It should not try to rank for "Digital Marketing" itself. This structure creates a clear hierarchy for the search bots. It tells them: "These satellite pages are experts in their niche, but the Pillar is the authority on the broad topic." This prevents the spokes from cannibalizing the hub.
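The link logic lends itself to an automated check. This sketch uses a hypothetical Page structure and sample URLs. It flags three kinds of spoke: those that target the pillar's broad term, those that do not link to the pillar, and those that link to it without the broad-term anchor text.

```python
from dataclasses import dataclass, field

@dataclass
class Page:
    url: str
    target_term: str                           # the term this page is optimized for
    links: list = field(default_factory=list)  # internal links as (url, anchor text)

def audit_cluster(pillar, spokes):
    """Flag spokes that break the hub-and-spoke link logic."""
    problems = []
    broad_term = pillar.target_term.lower()
    for spoke in spokes:
        if spoke.target_term.lower() == broad_term:
            problems.append(f"{spoke.url} targets the pillar term '{pillar.target_term}'")
        anchors = [anchor.lower() for url, anchor in spoke.links if url == pillar.url]
        if not anchors:
            problems.append(f"{spoke.url} does not link to the pillar {pillar.url}")
        elif broad_term not in anchors:
            problems.append(f"{spoke.url} links to the pillar without the anchor '{pillar.target_term}'")
    return problems

pillar = Page("/digital-marketing", "Digital Marketing")
spokes = [
    Page("/seo-tips", "SEO Tips", [("/digital-marketing", "Digital Marketing")]),
    Page("/ppc-guide", "PPC", [("/digital-marketing", "click here")]),
    Page("/email-marketing", "Digital Marketing"),  # a spoke competing with its hub
]
for problem in audit_cluster(pillar, spokes):
    print(problem)
```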

5.3 Semantic Distancing with LSI Keywords

When topics are naturally close (e.g., "SEO Services" vs. "SEO Consulting"), we must use LSI keywords (Latent Semantic Indexing) to artificially create distance between them.

- Strategy: For the "Services" page, focus on transactional LSI terms: packages, pricing, agency, deliverables, contract.

- Strategy: For the "Consulting" page, focus on advisory LSI terms: strategy, audit, roadmap, expert opinion, mentorship.

By shifting the semantic cloud of each page, we help the vector algorithms distinguish between the "Do it for me" intent (Services) and the "Tell me how to do it" intent (Consulting).

Chapter 6: Remediation Protocols – The Surgery

Once cannibalization is identified, the cleanup process (often referred to as "Content Pruning" or "Remediation") begins. This is surgical work. One wrong move, like deleting a page with strong backlinks without a redirect, can cause lasting damage. We use a standardized decision matrix: Merge & Redirect, Pivot, Canonicalize, or Delete.

6.1 Protocol A: The Consolidation (Merge & Redirect)

This is the gold standard for pages that serve the exact same intent.

- The Scenario: You have three posts: "SEO Tips 2022," "SEO Tips 2023," and "Best SEO Advice."

- The Analysis: All three target the "informational" intent for "SEO tips." Splitting them dilutes the links.

- The Execution:

- Select the Survivor: Identify the strongest URL (highest backlinks, best traffic, or cleanest URL slug). Let's say it's "Best SEO Advice."

- Harvest the Value: Extract specific paragraphs, unique data points, or images from the "2022" and "2023" posts and integrate them into the Survivor page. Make it the ultimate resource.

- The 301 Redirect: Implement server-side 301 redirects from the two old URLs to the Survivor URL.

- The Outcome: Link equity from three sources is combined into one. Users get a better, more comprehensive guide. Google sees a clear authority signal. Rankings typically improve within 2-4 weeks.
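Before deploying the 301s, it is worth making sure no old URL ends up in a redirect chain (A to B to C) or a loop. This sketch collapses chains so each retired URL points straight at the Survivor, and it raises an error on loops. The legacy /seo-tips-2021 rule is a hypothetical pre-existing redirect. The printed lines use Apache's mod_alias Redirect syntax as one example; adapt them to your server or CMS.

```python
def resolve_redirects(redirects):
    """Collapse chains so each old URL 301s straight to its final destination
    (A -> B -> C becomes A -> C). Raises ValueError on a redirect loop."""
    resolved = {}
    for source, target in redirects.items():
        seen = {source}
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        resolved[source] = target
    return resolved

# Protocol A: both dated posts fold into the Survivor. The 2021 rule is a
# hypothetical legacy redirect that would otherwise create a chain.
REDIRECTS = {
    "/seo-tips-2022": "/best-seo-advice",
    "/seo-tips-2023": "/best-seo-advice",
    "/seo-tips-2021": "/seo-tips-2022",
}

for old, new in sorted(resolve_redirects(REDIRECTS).items()):
    # Apache mod_alias syntax, shown as one example of a server-side 301
    print(f"Redirect 301 {old} {new}")
```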

6.2 Protocol B: The Pivot (Differentiation)

Use this when both pages are valuable but currently overlap due to lazy targeting.

- The Scenario: A blog post "Email Marketing Guide" is outranking a product page "Email Marketing Software" for the term "Email Marketing."

- The Execution: You cannot merge them because one is a product and one is a blog. You must "pivot" the blog post.

- Rename: Change the blog title to "Email Marketing Strategy & Examples."

- De-optimize: Remove product-oriented terms such as "Software" from the blog post's headers and metadata, so it stops competing for commercial variants of the query.

- Link: Add a prominent internal link from the blog post to the product page with the anchor text "Email Marketing Software."

- Intent Shift: Rewrite the intro of the blog to focus purely on education, explicitly stating that if the user wants tools, they should check the product page. This clarifies the roles of the two pages. The blog becomes the "top of funnel" entry point that funnels traffic to the "bottom of funnel" product page.

6.3 Protocol C: The Canonical Tag (The Soft Fix)

This is reserved for technical duplicates where pages must remain accessible but should not be indexed separately (e.g., a specific landing page for a PPC campaign that is a duplicate of a generic page).

- The Execution: Add <link rel="canonical" href="https://site.com/master-page" /> to the <head> section of the duplicate page.

- The Risk: This is a "hint," not a directive. Google may ignore it if the content is too different. It does not consolidate link equity as efficiently as a 301 redirect. It is a secondary option, not a primary one.
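When auditing Protocol C, you can confirm which canonical a duplicate page actually declares. This sketch uses Python's built-in html.parser, and the sample page is hypothetical. A page with zero or several canonical tags is worth flagging, because missing or conflicting hints make it more likely that the search engine picks its own canonical.

```python
from html.parser import HTMLParser

class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag and attribute names; values may be None.
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        href = attributes.get("href")
        if "canonical" in rel_values and href:
            self.canonicals.append(href)

def canonical_urls(html):
    finder = CanonicalFinder()
    finder.feed(html)
    finder.close()
    return finder.canonicals

# Hypothetical PPC landing page that duplicates a generic page
DUPLICATE_PAGE = """<html><head>
<title>Spring Offer</title>
<link rel="canonical" href="https://site.com/master-page" />
</head><body>Same copy as the master page.</body></html>"""

found = canonical_urls(DUPLICATE_PAGE)
if len(found) != 1:
    print(f"Check this page: {len(found)} canonical tags found")
else:
    print(f"Canonical hint points to {found[0]}")
```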

6.4 Protocol D: The Deletion (Pruning)

For pages that provide no value—old press releases, "thin" content (under 300 words), or low-quality AI drafts—deletion is the healthy choice.

- The Execution: If the page has no backlinks and no traffic, delete it and serve a 410 Gone status. This signals that the removal is permanent ("this is gone forever"), which is a strong cue for Google to drop the URL from its index. If it has backlinks, 301 redirect it to the nearest relevant category page to salvage the link equity.
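Protocol D reduces to a small decision rule. This sketch encodes the two cases described above: no backlinks and no traffic leads to a 410, and backlinks lead to a 301 to the nearest category page. The protocol does not cover a page with traffic but no backlinks, so the sketch assumes such a page goes to human review. Field names and sample data are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class PageStats:
    url: str
    backlinks: int         # external referring links
    sessions_12m: int      # organic sessions over the last 12 months
    nearest_category: str  # hypothetical: closest relevant category page

def prune_action(page):
    """Return (action, redirect target or None) for a pruning candidate."""
    if page.backlinks > 0:
        # Salvage link equity by redirecting to the nearest relevant category.
        return ("301", page.nearest_category)
    if page.sessions_12m == 0:
        # No links and no traffic: remove permanently.
        return ("410", None)
    # Assumption: traffic without backlinks is outside Protocol D; flag for a human.
    return ("review", None)

candidates = [
    PageStats("/old-press-release", 0, 0, "/news"),
    PageStats("/2019-webinar", 4, 0, "/webinars"),
    PageStats("/thin-faq", 0, 120, "/faq"),
]
for page in candidates:
    print(page.url, prune_action(page))
```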

Chapter 7: Case Studies – Proof of Concept

The theory of cannibalization is robust, but the application is where results are proven. Recent industry case studies from 2024 and 2025 demonstrate the ROI of resolving these conflicts.

7.1 The SaaS Growth Engine: Planable

Planable, a social media collaboration tool, faced stagnation despite publishing high volumes of content. An audit revealed significant overlap between their glossary pages, blog posts, and feature pages.

- The Action: They executed a massive consolidation project, merging thin glossary terms into comprehensive guides and redirecting competing blog posts.

- The Result: From July 2024 to January 2025, organic traffic increased by 176%. The consolidation didn't just stop the bleeding; it created "super-pages" that dominated the SERPs. The removal of internal competition allowed their domain authority to be applied efficiently rather than wasted.

7.2 The Luxury Turnaround: Huntsman

Huntsman, a heritage tailoring brand, struggled with legacy content after expanding to the US market. Decades of "news" updates and duplicate product descriptions had created a cannibalization mess.

- The Action: An agency performed a forensic audit, identifying thousands of toxic backlinks and internal conflicts. They merged content and set strict 301 redirects.

- The Result: Revenue from organic traffic increased by 170%. This highlights a critical point: resolving cannibalization is not just an SEO metric fix; it is a revenue driver. By ensuring the high-intent transactional pages ranked instead of low-intent news updates, they captured the user at the moment of purchase.

7.3 The Diagnostic Win: SmartClick

SmartClick agency identified a specific conflict for the keyword "SaaS SEO Consultant." Their service page was fighting their own blog post "How to Choose a Consultant."

- The Data: GSC showed the pages swapping positions constantly, never breaking the top 5.

- The Fix: They pivoted the blog post to focus purely on the "How-to" intent, while optimizing the service page for the "Best Agency" intent.

- The Result: The volatility ceased. The service page anchored itself in the top 3, while the blog post ranked for long-tail research queries, effectively capturing users at both stages of the funnel without conflict.

Chapter 8: Future-Proofing – Strategy for the Generative Era

As we look toward 2026 and beyond, the SEO environment will be dominated by AI Overviews, the successor to Google's Search Generative Experience (SGE). These systems synthesize answers from multiple sources. In this world, authority is binary: you are either a trusted source, or you are noise.

8.1 Predictive Intent Modeling

The future of preventing cannibalization lies in Predictive Intent Modeling. AI models will soon forecast what a user will search for next. If a user searches "SEO Basics," the AI anticipates a follow-up for "Keyword Research."

- The Strategy: Successful sites will architect their content to match this predictive chain. You must link your "Basics" page to your "Keyword" page with clear, logical connections. If you have broken links or cannibalized content in this chain, you break the "predictive flow," causing the AI to abandon your site as a source for the user's journey.

8.2 The "E-E-A-T" Firewall

To survive the flood of AI content, brands must double down on E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). Google is explicitly training its quality raters and algorithms to value "first-hand experience."

- The Human Advantage: A blog post written by an AI can define "cannibalization." But only a human expert can say, "In my experience auditing a 10,000-page enterprise site, the biggest hurdle wasn't the data, it was convincing the CMO to delete 4,000 pages."

- The Tactic: Inject personal anecdotes, proprietary data, and contrarian opinions into every piece of content. This "human residue" is the signal that separates valuable content from the commodity slop produced by LLMs.

8.3 The Traficxo Standard

At Traficxo, the approach to SEO, whether it is startup SEO or enterprise consulting, centers on this philosophy of architectural purity. We do not believe in "more content." We believe in "better architecture."

The standard operating procedure for 2026 involves:

- Quarterly Intent Audits: Using vector analysis to spot drifting content.

- Ruthless Pruning: If a page hasn't generated traffic in 12 months, it is merged or deleted.

- Strict Governance: No content is commissioned without an "Intent Check" against the existing library.

- Technical Rigor: Ensuring technical SEO audits are not just checklists, but strategic realignments of the site's skeleton.

Conclusion: The Discipline of Less

Content cannibalization is the natural entropy of a growing website. Without energy applied to the system (maintenance), order degrades into chaos. The "more is better" mindset that fueled the content marketing boom of the 2010s is now a liability. In the age of AI, where content is infinite and free, curation and authority are the scarce, valuable assets.

Fixing cannibalization is more than a cleanup job; it is a strategic pivot. It requires the courage to delete, the discipline to merge, and the foresight to plan. It means accepting that one incredible page is worth more than fifty mediocre ones. For the digital leaders of 2026, the path to growth is counter-intuitive: to grow bigger, you often need to become smaller, tighter, and more focused. By eliminating the internal noise, you allow your true signal to be heard by both the algorithms and, more importantly, your customers.
